Governance, built in.

Audit logs assembled by hand. Cohort approval cycles restarted from zero. Consent revocations that never propagate. Prometheno’s HAVEN consent registry and hash-chained audit log end all three automatically, on every researcher query. When the IRB asks, the chain is ready.

Three pains every research-active service knows.

Audit assembled by hand

Every IRB request and every regulator inquiry starts a multi-week PDF compilation job. Audit logs are scattered across the EHR, email, and manual spreadsheets. The chain isn’t broken; it doesn’t exist as a chain.

Every cohort restarts the cycle

IRB amendment. DUA renewal. IT access provisioning. Each new study runs the full bureaucratic gauntlet. The infrastructure that should compose, doesn’t.

Consent revocations that don’t propagate

A patient changes their mind. The downstream cohort doesn’t know. The published study includes records the patient already withdrew. There is no system-wide enforcement, only a paper trail and good intentions.

HAVEN consent + audit, built into the Prometheno platform. VERITAS and its published benchmark, VeritasBench, sit on top for when AI agents enter the workflow.

[Figure: platform stack with layers HAVEN consent + audit, Prometheno, VERITAS, VeritasBench]

How HAVEN runs underneath every query.

A researcher requests a cohort through Forge. HAVEN scope-checks consent, audits every access, and stays out of the researcher’s way. The chain you hand to the IRB is built automatically, not compiled after the fact.

Request

Researcher queries Forge for a diabetes cohort.

    POST /api/cohorts/build

    {
      "criteria": {
        "condition": "type 2 diabetes",
        "age_range": [40, 65],
        "med": "metformin"
      },
      "purpose": "outcome_study"
    }

Consent check

HAVEN registry filters to consented patients only.
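A minimal sketch of that filter, under stated assumptions. Each patient is assumed to have a consent record that lists the (scope, purpose) pairs they granted, plus a revocation flag. `ConsentRecord` and this `verify_consent` are hypothetical stand-ins for `HAVEN.verify_consent`, not the registry's actual schema or signature.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ConsentRecord:
    """Hypothetical per-patient consent record (assumed shape, not the HAVEN schema)."""
    patient_id: str
    # (scope, purpose) pairs the patient has agreed to.
    grants: frozenset = field(default_factory=frozenset)
    revoked: bool = False


def verify_consent(records, scope, purpose):
    """Split patients into those who pass the scope check and those excluded.

    A patient passes only if consent is not revoked AND the exact
    (scope, purpose) pair was granted. Each exclusion records its reason.
    """
    passed, excluded = [], []
    for rec in records:
        if rec.revoked:
            excluded.append((rec.patient_id, "revoked"))
        elif (scope, purpose) not in rec.grants:
            excluded.append((rec.patient_id, "scope mismatch"))
        else:
            passed.append(rec.patient_id)
    return passed, excluded


if __name__ == "__main__":
    registry = [
        ConsentRecord("p1", frozenset({("clinical_data", "outcome_study")})),
        ConsentRecord("p2", frozenset({("clinical_data", "quality_improvement")})),
        ConsentRecord("p3", frozenset({("clinical_data", "outcome_study")}), revoked=True),
    ]
    ok, dropped = verify_consent(registry, "clinical_data", "outcome_study")
    print(ok)       # ['p1']
    print(dropped)  # [('p2', 'scope mismatch'), ('p3', 'revoked')]
```

In this sketch, revocation is checked when the cohort is built rather than when a study was first approved. That timing is what lets a withdrawal take effect on the next query without anyone updating it by hand.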

    HAVEN.verify_consent(
        patients=[...],
        scope="clinical_data",
        purpose="outcome_study"
    )

    → 1,847 / 2,300 patients pass scope check
      (453 excluded: scope mismatch or revoked)

Audit entry

Hash-chained record per access. Tamper-evident.
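A minimal sketch of a hash-chained log. It assumes each entry's hash is SHA-256 over the previous hash plus a canonical JSON encoding of the entry. The field names mirror the entry shown here. The hashing scheme, the all-zero genesis value, and the `AuditLog` class are all hypothetical. The ed25519 signature is omitted because it needs a third-party library, so the hashes this produces will not match HAVEN's.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # assumed "previous hash" for the very first entry


def entry_hash(body: dict, prev_hash: str) -> str:
    """SHA-256 over the previous hash plus a canonical JSON encoding of the entry."""
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + canonical).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only, hash-chained audit log (illustrative, not HAVEN's format)."""

    FIELDS = ("seq", "action", "scope", "purpose")

    def __init__(self):
        self.entries = []

    def append(self, action: str, scope: str, purpose: str) -> dict:
        # Each new entry links back to the hash of the entry before it.
        prev = self.entries[-1]["this_hash"] if self.entries else GENESIS_HASH
        body = {"seq": len(self.entries), "action": action,
                "scope": scope, "purpose": purpose}
        entry = {**body, "prev_hash": prev, "this_hash": entry_hash(body, prev)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Replay the chain: recompute every hash and check every back-link."""
        prev = GENESIS_HASH
        for e in self.entries:
            body = {k: e[k] for k in self.FIELDS}
            if e["prev_hash"] != prev or e["this_hash"] != entry_hash(body, prev):
                return False
            prev = e["this_hash"]
        return True


if __name__ == "__main__":
    log = AuditLog()
    log.append("cohort_build", "clinical_data", "outcome_study")
    log.append("record_access", "clinical_data", "outcome_study")  # hypothetical action
    print(log.verify())  # True
    log.entries[0]["purpose"] = "marketing"  # tamper with an earlier entry
    print(log.verify())  # False
```

Editing any stored entry changes its recomputed hash and breaks the link to every later entry. This is what makes the log tamper-evident rather than tamper-proof. A party able to rewrite the whole log could recompute every hash, which is presumably why each entry shown also carries a signature.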

    AUDIT entry #5247
    action: cohort_build
    scope: clinical_data
    purpose: outcome_study
    prev_hash: 0x57238bf3...
    this_hash: 0x9799d065...
    signed: ed25519:fa9c...

IRB-ready

Replay the chain on demand. No compilation needed.
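A Merkle inclusion proof lets a verifier confirm that one access entry belongs to an exported set by checking a short path of sibling hashes against a single root, without rehashing every entry. Below is a minimal sketch built on a plain binary Merkle tree over SHA-256. Leaf and interior hashes use the 0x00 and 0x01 domain-separation prefixes from RFC 6962 (Certificate Transparency). An unpaired node is simply carried up to the next level, which is a simplification rather than RFC 6962's exact split rule. HAVEN's actual tree construction and proof format may differ, and the function names are hypothetical.

```python
import hashlib


def _leaf(data: bytes) -> bytes:
    # 0x00 prefix separates leaf hashes from interior-node hashes.
    return hashlib.sha256(b"\x00" + data).digest()


def _node(left: bytes, right: bytes) -> bytes:
    # 0x01 prefix marks an interior node.
    return hashlib.sha256(b"\x01" + left + right).digest()


def merkle_root_and_proof(leaves, index):
    """Return (root, proof); proof is a list of (sibling_hash, sibling_is_left)."""
    if not 0 <= index < len(leaves):
        raise IndexError("leaf index out of range")
    level = [_leaf(x) for x in leaves]
    proof = []
    while len(level) > 1:
        nxt = []
        for i in range(0, len(level), 2):
            if i + 1 < len(level):
                nxt.append(_node(level[i], level[i + 1]))
            else:
                nxt.append(level[i])  # unpaired node is carried up unchanged
        sibling = index ^ 1  # partner within the pair
        if sibling < len(level):
            proof.append((level[sibling], sibling < index))
        index //= 2  # position of our node on the next level
        level = nxt
    return level[0], proof


def verify_inclusion(leaf_data: bytes, proof, root: bytes) -> bool:
    """Rebuild the path from one leaf to the root and compare."""
    h = _leaf(leaf_data)
    for sibling, sibling_is_left in proof:
        h = _node(sibling, h) if sibling_is_left else _node(h, sibling)
    return h == root


if __name__ == "__main__":
    entries = [f"access entry {i}".encode() for i in range(5)]
    root, proof = merkle_root_and_proof(entries, 3)
    print(verify_inclusion(entries[3], proof, root))       # True
    print(verify_inclusion(b"forged entry", proof, root))  # False
```

The proof grows with the logarithm of the entry count. In this construction, checking one of 1,847 entries takes at most 11 sibling hashes rather than all 1,847 entries.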

    $ haven audit export \
        --scope cohort_5247 \
        --format irb-pdf

    → chain verified ✓
    → 1,847 access entries
    → Merkle inclusion proofs
    → output: cohort_5247.pdf

AI agents introduce a different gap. Measured.

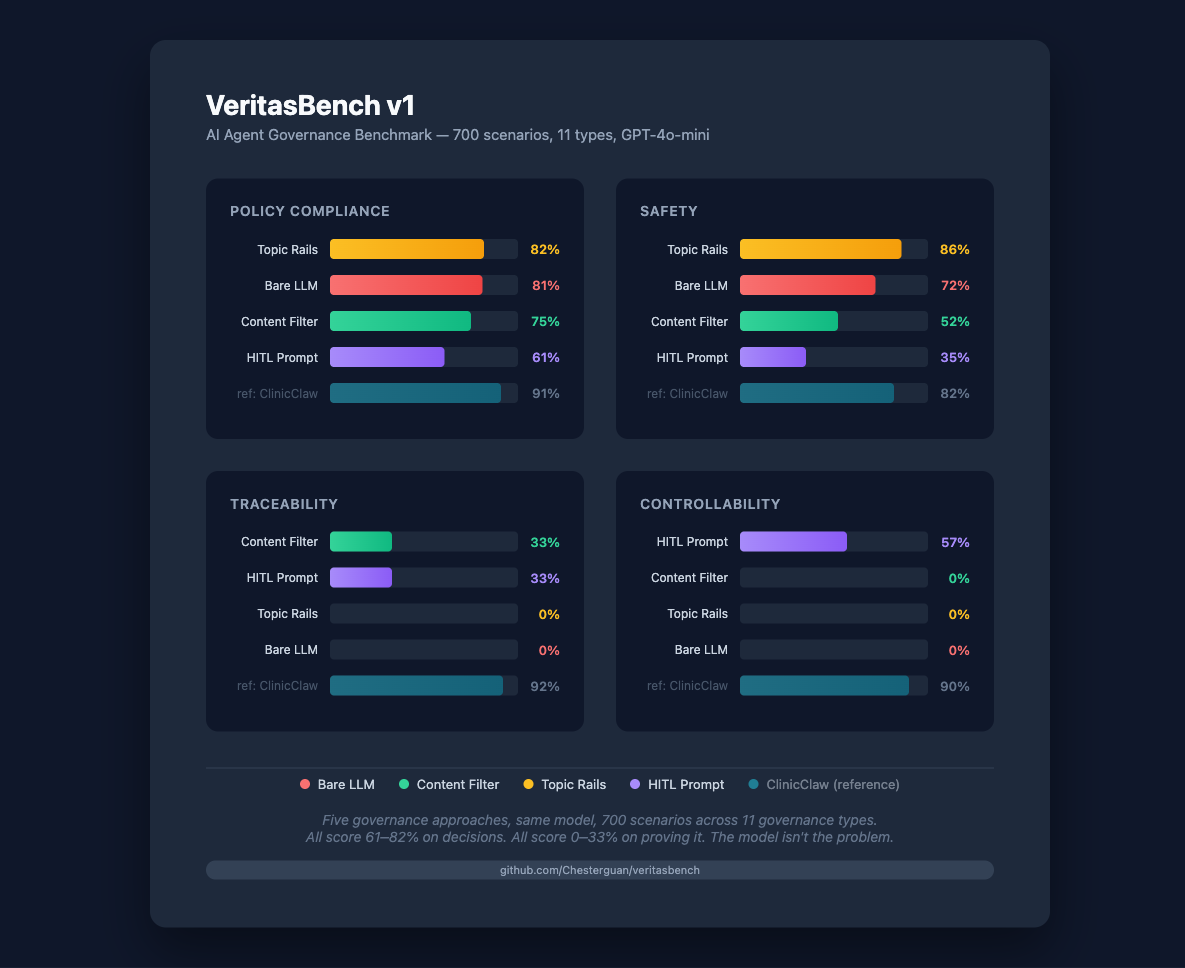

VeritasBench tested 5 approaches across 700 scenarios. A bare LLM scores 81% on policy but 0% on traceability and 0% on controllability. VERITAS closes that gap. Use it only when AI agents enter your workflow.

Code shown is illustrative of the API shape. Hash values are produced by the actual HAVEN reference implementation; specific values vary per scenario. ClinicClaw’s 91% in the chart reflects rules designed with knowledge of the scenario types.

When this works at scale.

Audit assembly stops being a multi-week job. IRB cycles compress because the chain already exists. Consent revocations propagate system-wide, not by hand. Your service generates research output without generating compliance debt.

We sell enterprise subscriptions: per service line, per institution. The HAVEN protocol stays open; Prometheno is the platform that runs it. VERITAS sits on top as the AI agent tier when you’re ready.

The work is open. Pick your level.

Bring HAVEN audit + consent into your service. We help with IRB framing and integration.

Read the HAVEN spec and the Prometheno backend reference implementation. Understand what runs underneath before deciding.

Bonus: run VeritasBench against your current AI deployment. Discover your traceability gap in an afternoon.

HAVEN and VeritasBench are both DOI-citable for grants, papers, and regulatory submissions.